Your MCP Server Is Ready. Your Organization Isn't.

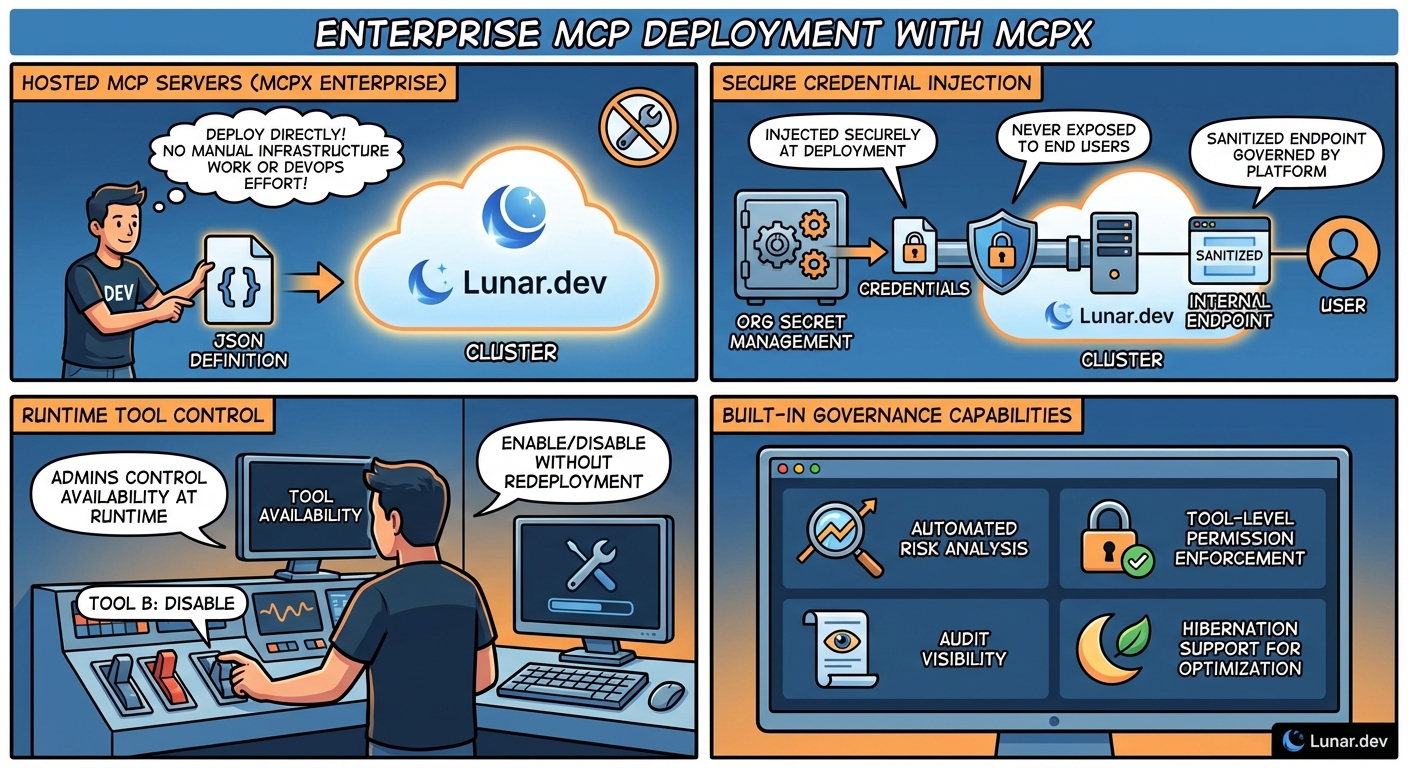

Hosted MCP Servers is a capability in MCPX Enterprise that lets teams deploy custom MCP servers from a JSON config into a managed cluster, with secrets management, tool-level controls, and automated risk analysis built in. No DevOps queue. No credential exposure.

MCP servers are everywhere. Dev teams are building custom tooling that drives real business value. The code lands quickly. Hours. Days, tops. Publishing it? That's where everything stalls. DevOps queues. Security reviews. Networking requests. Credential management. Weeks of friction that have nothing to do with the code itself.

The bottleneck isn't engineering. It's an infrastructure process.

Hosted MCP Servers eliminates that bottleneck entirely. In this post, I'll walk through the friction points we targeted and how we built around them:

- Deploy from JSON: Ship any MCP server to Lunar.dev's cluster. No infra work. No DevOps queue.

- Secrets stay secret: Credentials injected at deploy time via your vault. Never exposed to users. Never in config files.

- Runtime control: Toggle tools on or off instantly. No redeploy. No code changes.

- Governance built in: Risk analysis, permissions, audit logs, and auto-hibernation out of the box.

The shift: from infrastructure-driven deployment to policy-driven self-service, without sacrificing security or operational control.

When GitHub published its official MCP server, it made a clear promise: follow this configuration, and it works. Updates ship on their schedule. There's a team behind it. If something breaks, you know exactly where to file the issue. You copy a JSON block, point your client at the endpoint, and you're running.

This is how MCP adoption should feel. Clean, fast, and someone else's problem to maintain.

The catch is that most organizations don't live in this world for long.

The moment it gets complicated

That model has a hard coverage limit. Vendors publish servers for their own products. They do not publish servers for your internal Jira instance, your proprietary data warehouse, or the custom tooling your engineering team built during the last sprint. For any MCP server that needs to wrap internal systems, your team owns the deployment, and that is where the tax starts.

The pattern shows up in three distinct scenarios for enterprise teams:

- A developer wraps an internal API or proprietary data source in a custom MCP server and needs it running where your agents or co-pilots can reach it.

- Your organization runs an enterprise server product internally (Atlassian, for example) and wants to expose it as an MCP server inside the cluster rather than through a public endpoint.

- An engineer is building net-new AI tooling and needs a place to deploy it without standing up new infrastructure.

The technical work takes hours. The organizational work takes weeks.

Deploying a server into an existing cluster means coordinating across a web of stakeholders: the developer who built it, DevOps who owns the cluster, security who controls access, IT who governs infrastructure, and compliance who validates policy. Sometimes more. Each team has valid concerns. Each runs on its own schedule. Each pads its estimates to account for cross-team dependencies that have a way of snowballing.

Then there's networking. If your MCP server lives in a different cluster than your AI agents, add VPC peering, firewall rules, and load balancer configs to the list. In enterprise environments, each is a separate approval, a separate queue, a separate delay. This isn't a technical limitation. It's organizational friction that scales with every server you ship.

What eliminating this tax actually requires

The bottleneck isn't velocity; it's the overhead that kills it. A real solution doesn't just move faster; it eliminates the friction that DevOps, security, and compliance create in the first place.

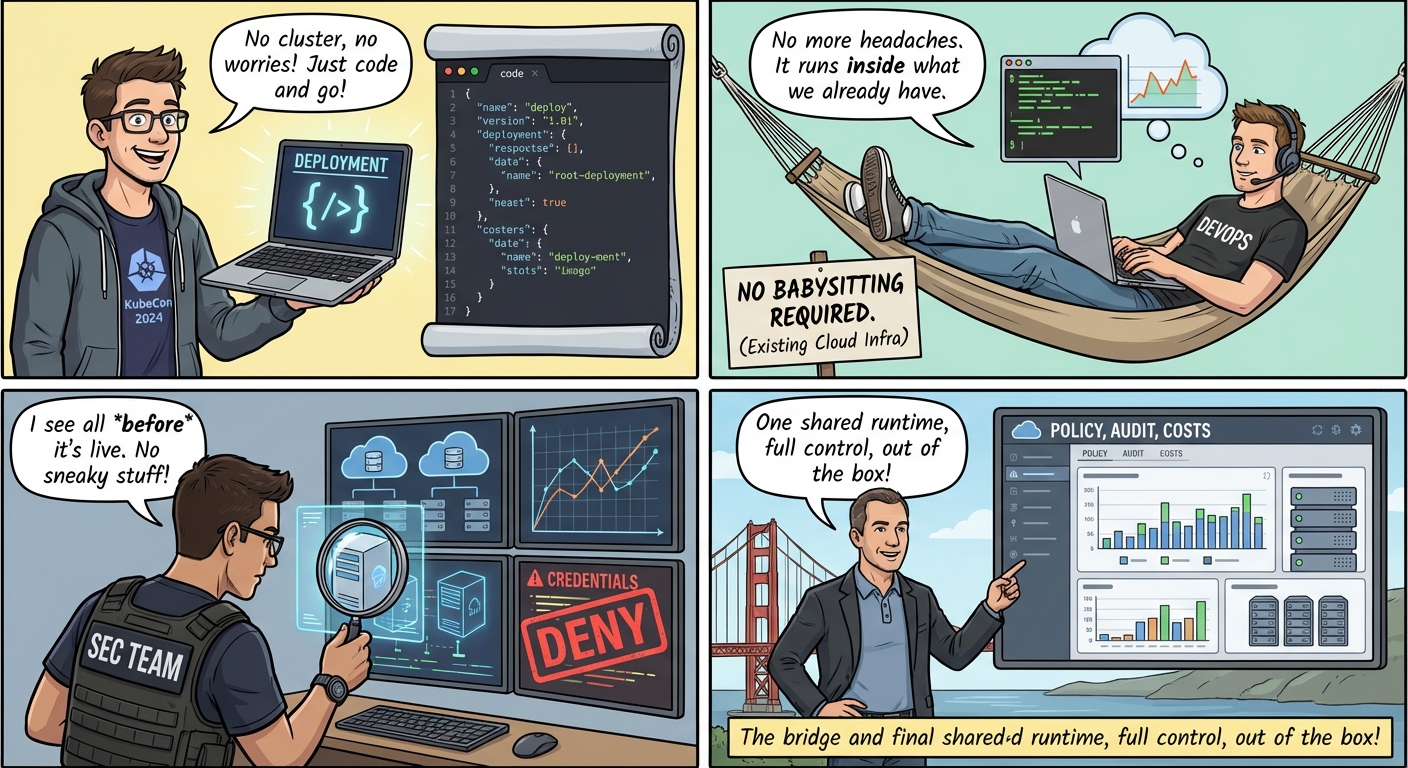

For developers: Deploy from a standard JSON definition, the same schema every public MCP server uses. No cluster access. No deep infrastructure knowledge needed. Just ship.

For security: Full visibility into what each server exposes before it goes live. Tool-level controls without touching code. Credentials that never reach end users or land in config files.

For DevOps: No new cluster to babysit. No cross-cluster networking headaches. No manual instance wrangling. The server runs inside the infrastructure you already manage.

For the organization: One runtime, shared across teams, with policy-based access, built-in audit trails, and cost controls that ship out of the box.

Solving for all four at once isn't a configuration problem. It requires an infrastructure layer that works across teams rather than between them.

How the public MCP ecosystem models this (and where it stops)

The public MCP ecosystem has made real progress. Vendors like GitHub, Notion, and Atlassian now publish MCP servers with standardized schemas. The model is straightforward: reference, authenticate, deploy. No operational overhead. No cluster management. No firefighting. No 3 AM pages.

The limitation is structural. Public servers only cover what vendors prioritize. Internal tools, custom integrations, proprietary systems. The infrastructure that defines your competitive advantage sits outside that boundary.

For most enterprise organizations, this isn't a gap at the margins. Internal tooling represents the majority of MCP surface area. The public ecosystem delivers a starting point. It doesn't deliver a platform.

That's where Lunar.dev's Hosted MCP Servers come in.

How Lunar.dev Simplifies Hosting MCP Servers

Hosted MCP Servers is a core capability in MCPX Enterprise. The model is simple: config in, infrastructure overhead out.

Developers package their server (Docker, NPX, or UVX) and provide a standard MCP JSON configuration. An admin uploads the config to the MCPX panel. Lunar.dev provisions the instance inside the cluster. No DevOps ticket. No cross-cluster networking. No developer access to infrastructure. The server runs on the same cluster as MCPX, instantly reachable by any authorized agent or client.

From there, Lunar.dev manages the runtime: version tracking, health status, CPU/memory utilization, last activity, and centralized logs. All without cluster access. Each instance gets a dedicated internal endpoint.
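Since everything starts from a standard MCP JSON configuration, it can help to check an entry's shape before uploading it. The sketch below is a minimal pre-upload check, assuming the layout the examples in this post use: an object keyed by server name, where each entry has either `command`/`args`/`env` for a packaged server or `url`/`type` for a remote one. `classify_server`, `classify_config`, and the launcher allowlist are hypothetical helpers, not part of MCPX.

```python
import json

# Launchers the post names for packaged servers (Docker, NPX, UVX).
SUPPORTED_LAUNCHERS = {"docker", "npx", "uvx"}


def classify_server(name: str, entry: dict) -> str:
    """Return 'stdio' or 'remote' for one MCP server entry, or raise ValueError."""
    if "command" in entry:
        # Packaged server launched as a local process.
        if entry["command"] not in SUPPORTED_LAUNCHERS:
            raise ValueError(f"{name}: unsupported launcher {entry['command']!r}")
        if not isinstance(entry.get("args", []), list):
            raise ValueError(f"{name}: 'args' must be a list")
        return "stdio"
    if "url" in entry:
        # Already-running server reached over HTTP.
        return "remote"
    raise ValueError(f"{name}: needs either 'command' or 'url'")


def classify_config(raw: str) -> dict:
    """Parse a JSON config keyed by server name and classify every entry."""
    config = json.loads(raw)
    return {name: classify_server(name, entry) for name, entry in config.items()}


if __name__ == "__main__":
    example = '{"slack": {"command": "npx", "args": ["-y", "@modelcontextprotocol/server-slack"]}}'
    print(classify_config(example))  # {'slack': 'stdio'}
```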

Five key benefits of hosting your MCP servers in Lunar.dev

1. Secrets management

A typical Slack MCP config tells the story: secrets aren't abstracted, they're inline. API keys, tokens, credentials, hardcoded and visible to anyone with file access.

```json
{
  "slack": {
    "command": "npx",
    "args": [
      "-y",
      "@modelcontextprotocol/server-slack"
    ],
    "env": {
      "SLACK_BOT_TOKEN": "xoxb-XXX-XXXX-XXXX-XXX",
      "SLACK_TEAM_ID": "YYYYYYYYYYY",
      "SLACK_CHANNEL_IDS": "AAAAAAAAA, BBBBBBBB, CCCCCCCC"
    }
  }
}
```

That config travels everywhere: tickets, onboarding docs, messages. So do the credentials embedded in it.
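To gauge how common this is in your own configs, the sketch below flags `env` entries whose names look like credentials and that hold a literal value. The name pattern is an assumed heuristic and `find_inline_secrets` is a hypothetical helper; it will miss secrets stored under innocuous variable names.

```python
import json
import re

# Assumed heuristic: env var names that usually carry credentials. Not exhaustive.
SECRET_NAME = re.compile(r"(TOKEN|SECRET|PASSWORD|API_KEY|PRIVATE_KEY)", re.IGNORECASE)


def find_inline_secrets(raw: str) -> list[str]:
    """List 'server.ENV_VAR' entries whose names look like credentials and hold a literal value."""
    hits = []
    for server, entry in json.loads(raw).items():
        for var, value in entry.get("env", {}).items():
            if SECRET_NAME.search(var) and value:
                hits.append(f"{server}.{var}")
    return hits


if __name__ == "__main__":
    config = json.dumps({
        "slack": {
            "command": "npx",
            "args": ["-y", "@modelcontextprotocol/server-slack"],
            "env": {
                "SLACK_BOT_TOKEN": "xoxb-XXX-XXXX-XXXX-XXX",
                "SLACK_TEAM_ID": "YYYYYYYYYYY",
            },
        }
    })
    print(find_inline_secrets(config))  # ['slack.SLACK_BOT_TOKEN']
```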

Hosted MCP Servers changes the model:

- MCPX never stores or manages secrets, only references them

- DevOps defines secrets in your existing manager (AWS Secrets Manager, Vault, etc.) and injects them via Helm as Kubernetes secrets

- Admins reference secret names at deploy time, never raw values

- Env vars are injected once, establish the connection, and are never logged or persisted
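The post does not show how a secret reference becomes a value inside the running server. One plausible shape, sketched below under that assumption, is the standard Kubernetes `valueFrom.secretKeyRef` env entry: the pod spec names the secret and key, and the value is injected when the container starts, so the rendered spec never contains the token. The `secret-name/key` reference format and `to_k8s_env` are hypothetical; MCPX's actual format may differ.

```python
import json


def to_k8s_env(refs: dict[str, str]) -> list[dict]:
    """Turn admin-supplied references ("secret-name/key") into Kubernetes env entries.

    The output uses the standard `valueFrom.secretKeyRef` shape, so the spec names
    the secret but never contains its value. The reference format is hypothetical.
    """
    env = []
    for var, ref in refs.items():
        secret_name, sep, key = ref.partition("/")
        if not sep or not secret_name or not key:
            # Also rejects a pasted raw token such as "xoxb-...", which has no slash.
            raise ValueError(f"{var}: expected 'secret-name/key', got {ref!r}")
        env.append({
            "name": var,
            "valueFrom": {"secretKeyRef": {"name": secret_name, "key": key}},
        })
    return env


if __name__ == "__main__":
    refs = {"SLACK_BOT_TOKEN": "slack-credentials/bot-token"}
    print(json.dumps(to_k8s_env(refs), indent=2))
```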

After deployment, users connect to the instance through an internal cluster endpoint. No public exposure. No credentials in transit. The server and its configuration remain fully isolated.

Here's what that same Slack MCP server looks like when hosted:

```json
{
  "slack": {
    "url": "http://mcpx-019af856-9c1c-77c1-a78b-cd3f3d4ca6c3:9000/mcp",
    "type": "streamable-http",
    "headers": {}
  }
}
```

This matters more than it sounds. In early MCP adoption, credential exposure is a real and recurring problem: tokens shared in Slack threads, config files passed around, screenshots taken without a second thought. Encapsulation eliminates this risk entirely. Not by demanding better discipline from your team, but by making exposure structurally impossible.

2. Tool-level control

Every deployed server exposes a structured manifest: tools, parameters, and required permissions. Admins enable or disable individual tools without code changes. A server with both read and write database access? Approve the reads, disable the writes, same session, no developer involvement.

No more deploy-review-change-redeploy loops. Security restrictions apply at approval time, not after the fact.
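Because the switch lives in front of the server rather than in its code, toggling a tool is only a policy change. The sketch below models that with a hypothetical `ToolPolicy` that filters a `tools/list`-style manifest and rejects `tools/call` requests for disabled tools; it illustrates the idea and is not MCPX's implementation.

```python
from dataclasses import dataclass, field


@dataclass
class ToolPolicy:
    """Admin-controlled switches for one hosted server (hypothetical model)."""
    disabled: set[str] = field(default_factory=set)

    def visible_tools(self, manifest: list[dict]) -> list[dict]:
        """Filter a tools/list-style manifest down to enabled tools."""
        return [tool for tool in manifest if tool["name"] not in self.disabled]

    def check_call(self, tool_name: str) -> None:
        """Reject a call to a disabled tool before it reaches the server."""
        if tool_name in self.disabled:
            raise PermissionError(f"tool {tool_name!r} is disabled by policy")


if __name__ == "__main__":
    # A server with read and write database access: approve reads, disable writes.
    manifest = [{"name": "query_rows"}, {"name": "insert_rows"}, {"name": "drop_table"}]
    policy = ToolPolicy(disabled={"insert_rows", "drop_table"})
    print([t["name"] for t in policy.visible_tools(manifest)])  # ['query_rows']
```

Enabling or disabling a tool only mutates `disabled`; the server process itself is untouched, which is why no redeploy is needed.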

For teams thinking about how tool exposure affects agent performance and security at runtime, see Why Dynamic Tool Discovery Solves the Context Management Problem.

3. Automated risk analysis

When a Hosted MCP Server is created, every tool is automatically analyzed: parameter types, token scopes, permissions, and risk, powered by your own LLM. Security gets a complete capability view before users can connect. No manual inspection. Every server. Every deployment. For a full breakdown of the MCP attack vectors this addresses, see MCP Risk Analysis.
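MCPX runs this analysis with your own LLM and also weighs parameter types and token scopes. Purely to show the shape of the per-tool output a reviewer receives, the sketch below swaps in crude keyword rules instead; `classify_tool`, `risk_report`, and the verb lists are hypothetical, and keyword matching is far weaker than the LLM-based analysis described above.

```python
import re

# Illustrative keyword rules standing in for LLM-based analysis.
WRITE_VERBS = {"create", "update", "delete", "drop", "insert", "write", "post", "send", "exec"}
READ_VERBS = {"get", "list", "read", "search", "query", "fetch"}


def classify_tool(tool: dict) -> str:
    """Return 'high', 'low', or 'review' for one manifest entry."""
    text = f"{tool['name']} {tool.get('description', '')}"
    # Split on anything that is not a letter, so 'slack_post_message' yields 'post'.
    words = set(re.split(r"[^a-z]+", text.lower()))
    if words & WRITE_VERBS:
        return "high"    # can change state somewhere
    if words & READ_VERBS:
        return "low"     # looks read-only
    return "review"      # unknown intent: a human should look


def risk_report(manifest: list[dict]) -> dict[str, str]:
    """Classify every tool in a tools/list-style manifest."""
    return {tool["name"]: classify_tool(tool) for tool in manifest}


if __name__ == "__main__":
    manifest = [{"name": "slack_post_message"}, {"name": "slack_get_channel_history"}, {"name": "ping"}]
    print(risk_report(manifest))
    # {'slack_post_message': 'high', 'slack_get_channel_history': 'low', 'ping': 'review'}
```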

4. Instance management and hibernation

Instances run in the Lunar cluster, hibernate when idle, and wake on demand. Admins see version, health, CPU/memory, and last activity. Costs track actual usage. No manual management required.
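Hibernation comes down to an idle timer plus wake-on-request. The sketch below is a minimal model of that lifecycle, assuming a single `idle_timeout` knob; the class and field names are hypothetical and say nothing about how Lunar.dev implements it. Time is passed in explicitly so the behavior is easy to test; a real loop would use something like `time.monotonic()`.

```python
from dataclasses import dataclass


@dataclass
class Instance:
    """Idle-based hibernation for one hosted server (illustrative model only)."""
    idle_timeout: float          # seconds of inactivity before hibernating (assumed knob)
    last_activity: float = 0.0
    running: bool = True

    def on_request(self, now: float) -> bool:
        """Handle a request at time `now`; return True if it triggered a wake-up."""
        woke = not self.running
        self.running = True
        self.last_activity = now
        return woke

    def tick(self, now: float) -> None:
        """Periodic check: hibernate once the instance has been idle long enough."""
        if self.running and now - self.last_activity >= self.idle_timeout:
            self.running = False


if __name__ == "__main__":
    inst = Instance(idle_timeout=600)
    inst.on_request(now=0)            # already running, no wake-up
    inst.tick(now=601)                # idle for more than 10 minutes
    print(inst.running)               # False
    print(inst.on_request(now=900))   # True: woke on demand
```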

5. Shared access with policy control

A single hosted instance serves many users in the organization. Admins control access based on permissions assigned in MCPX. The developer does not manage multiple deployments or coordinate access. One instance, one internal address, access governed by company policy.
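Access to a shared instance reduces to checking the caller against the server's policy. The sketch below assumes a simple group-based model in which `policy` maps a server name to the groups allowed to use it; `User`, `can_connect`, and the group names are hypothetical stand-ins for the permissions an admin assigns in MCPX. Servers absent from the policy deny everyone, which is the safer default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    name: str
    groups: frozenset[str]


def can_connect(user: User, server: str, policy: dict[str, set[str]]) -> bool:
    """True if any of the user's groups is allowed on `server`.

    Servers missing from `policy` get an empty allow-list, so everyone is denied.
    """
    return bool(user.groups & policy.get(server, set()))


if __name__ == "__main__":
    policy = {"slack": {"support", "sales"}}
    alice = User("alice", frozenset({"support"}))
    bob = User("bob", frozenset({"finance"}))
    print(can_connect(alice, "slack", policy), can_connect(bob, "slack", policy))  # True False
```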

What This Means for Enterprise MCP Adoption

Hosted MCP Servers removes the coordination overhead that slows enterprise MCP adoption. As developers keep building MCP servers, they can now ship them without deep infrastructure skills or cluster permissions. The security team gets automatic risk analysis and tool-level classification on every deployment, before anything goes live. DevOps has nothing new to manage. The organization runs one governed instance instead of fragmented deployments. At Lunar.dev, we built the infrastructure layer so your teams can focus on building the agentic layer without the operational tax.

Availability

Hosted MCP Servers is available in MCPX Enterprise. If your organization runs MCPX and you want to understand deployment requirements and access configuration, contact our team or book a demo.

For teams evaluating MCPX for enterprise deployment, the MCPX product page and MCPX documentation cover the full capability set.

Ready to start your journey?

Govern all agentic traffic in real time with enterprise-grade security and control. Deploy safely on-prem, in your VPC, or hybrid cloud.


FAQ

What is a hosted MCP server?

A hosted MCP server is a custom MCP server deployed into a managed cluster from a JSON config, where the platform handles secrets, networking, and runtime. Lunar.dev's MCPX provisions instances inside your existing cluster with no DevOps effort or cross-team coordination.

How is a hosted MCP server different from self-hosted or vendor-published?

Vendor-published servers cover only that vendor's product. Self-hosted servers require weeks of DevOps and security approvals. Lunar.dev's MCPX eliminates that overhead while developers package and own their server in Docker, NPX, or UVX.

How does a hosted MCP server handle secrets?

In MCPX, credentials are never stored in config files or exposed to users. Secrets live in your existing vault (AWS Secrets Manager, HashiCorp Vault) and are injected at deploy time as Kubernetes secrets.